What Every Powerful AI Agent Should Include

What should every powerful AI Agent include?

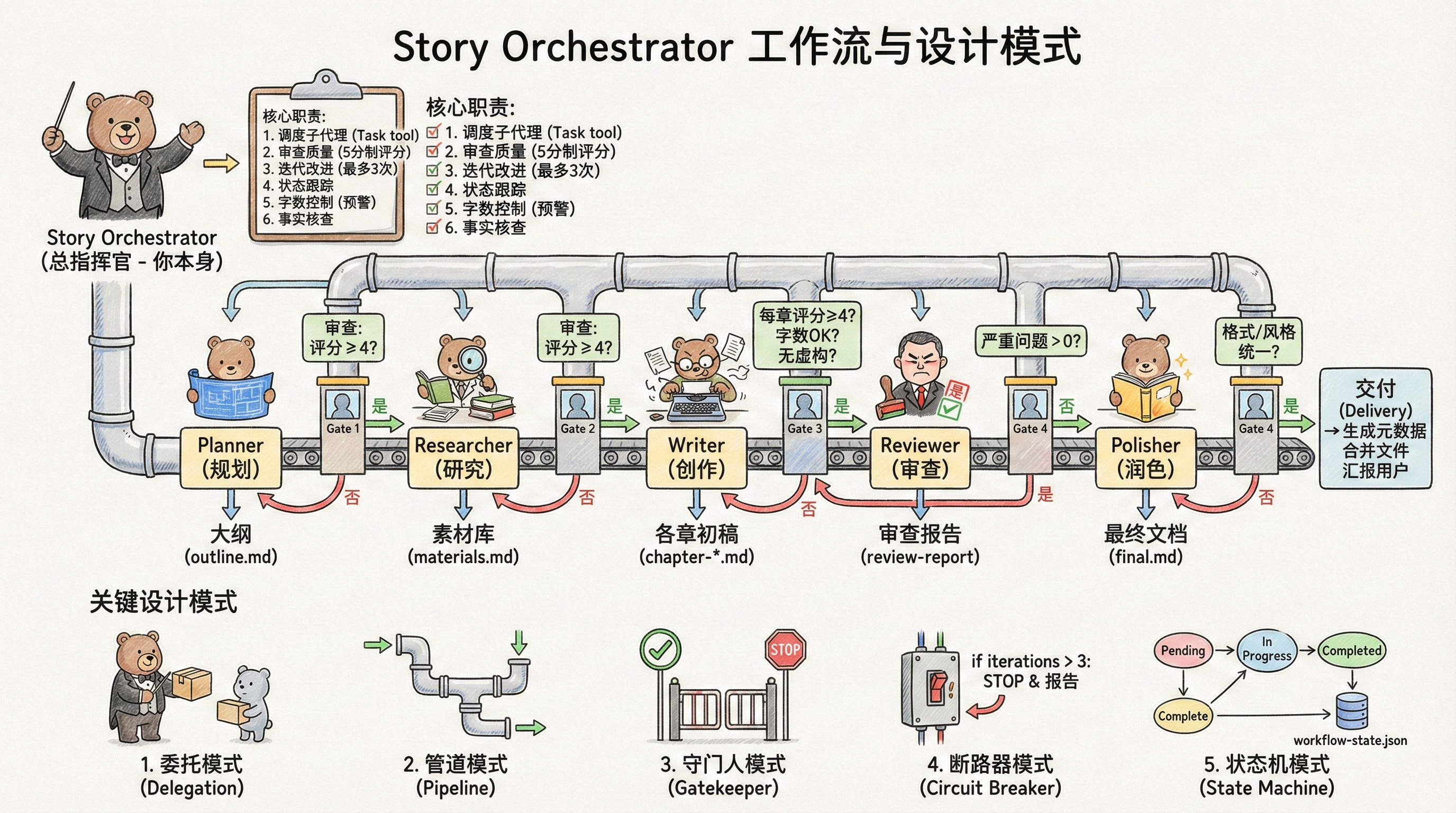

Orchestrator-Workers for task delegation, Pipeline for orchestration, Circuit Breaker for fault tolerance, and State Machine for state management.

One thing OpenCode does particularly well is its ability to drive three models simultaneously for code design and implementation — each model has its own strengths, and they complement each other effectively.

This sounds complex, but it’s actually quite simple: just imagine the three models as three SubAgents, each using a different API to connect to the underlying model, with a main Agent dedicated to orchestrating these three SubAgents to accomplish the task.

The trickier part is handling the protocol differences between the three providers, but since many wrapper apps have already implemented this feature, it can’t be too complicated — many open-source frameworks even have it built-in.

This is actually standard practice for many Agent products, they just don’t advertise it. Products that don’t let users choose models almost certainly use multiple models working in concert behind the scenes.

Recently, I’ve been building an auto story-writing Skill (purely for learning — all my articles are personally written). It’s a complete Agent, wrapped in a Skill layer so I can leverage Claude Code’s underlying capabilities without actually developing an Agent via SDK.

Let me share the four design patterns I commonly use when designing Agents, using this Skill as a practical example.

1. Delegation Pattern & Evaluator-Optimizer

What we call “delegation” is the Orchestrator-Workers pattern — a single orchestrator Agent drives all SubAgents to execute tasks, with the orchestrator responsible only for task distribution and review.

I divide SubAgents into two categories: Plan Agent and Workers Agent. Plan Agent handles task planning; after review by the orchestrator, tasks are handed off to Workers Agent for execution.

You can also extract a separate Evaluator Agent specifically for evaluating SubAgent results, but I typically have the orchestrator Agent handle evaluation directly.

One advantage of this approach: each SubAgent has its own independent context, preventing context explosion (a degradation in LLM performance caused by overly long conversation history).

2. Pipeline

The Pipeline pattern constructs a workflow that orchestrates all SubAgent actions, passing the final output of one Agent as the input to the next.

In my Skill, the workflow is: task planning → research material database → initial chapter drafts → document review (accuracy & punctuation) → polishing.

At each stage, there’s a review mechanism to verify whether the current step meets quality standards. The reviewer is the orchestrator Agent mentioned earlier.

The benefit: if a step fails, there’s no need to start over. Simply direct the responsible Agent to refine and resubmit that specific step.

For instance, during chapter draft writing, if the orchestrator Agent discovers that the first two chapters exceed our planned word count, it will raise this concern during review and have the responsible Agent optimize and revise. Previous completed tasks — like planning and material research — don’t need to be repeated.

3. Circuit Breaker Pattern

When the orchestrator Agent evaluates each SubAgent’s results, it maintains an iteration counter.

Each retry request increments the counter by 1. Once a threshold is exceeded, the orchestrator pauses all scheduling and reports the current impasse to the user, waiting for their input.

4. State Machine Pattern

The state machine stores the current task execution results of all Agents, intermediate task results, and intermediate variables used during execution (such as iteration counts).

It also serves a function similar to a Hook: as mentioned earlier, after each task completes, it undergoes review by the orchestrator Agent. The review conditions are stored in the state machine. Once review passes, the orchestrator Agent updates the state within the state machine.

Think of it as a sophisticated TodoList, with only the orchestrator Agent able to modify its state.

Additionally, it handles state recovery: if Claude Code crashes unexpectedly, after restart the system can restore the original context from records in the state machine, and the orchestrator Agent continues orchestrating to complete the remaining tasks.

These patterns are just what I commonly use — you can add other patterns as needed. For example, I could add a parallel Agent to the material research step to improve network search efficiency.

Finally, if you have better practices to share, feel free to leave comments. The Skill example’s complete workflow is shown in the attached diagram.